A Neural Network Training Method Based on Neuron Connection Coefficient Adjustments

Kun Jiang

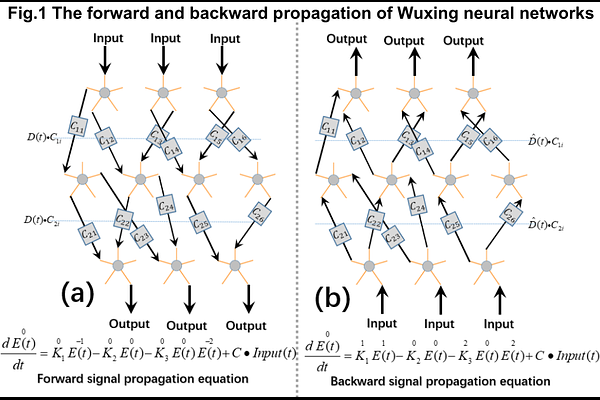

Abstract. In previous studies, we introduced a neural network framework based on symmetric differential equations, along with one of its training methods. In this article, we present another training approach for this network. The method relies on backward signal propagation and does not depend on the traditional chain rule of differentiation, which gives it a high degree of biological interpretability. Unlike the previously introduced method, it does not require adjusting the fixed points of the differential equations. Instead, it modifies only the connection coefficients between neurons, closely resembling the training process of traditional multilayer perceptron (MLP) networks. With a suitable adjustment strategy, the method avoids certain potential local minima. To validate the approach, we tested it on the MNIST dataset and obtained promising results. Further analysis revealed certain limitations of the current neural network architecture, and we propose measures for improvement.