Sparse Stimulus Generation Improves Reverse Correlation Efficiency and Interpretability

Gargano, J. A.; Rice, A.; Chari, D. A.; Parrell, B.; Lammert, A. C.

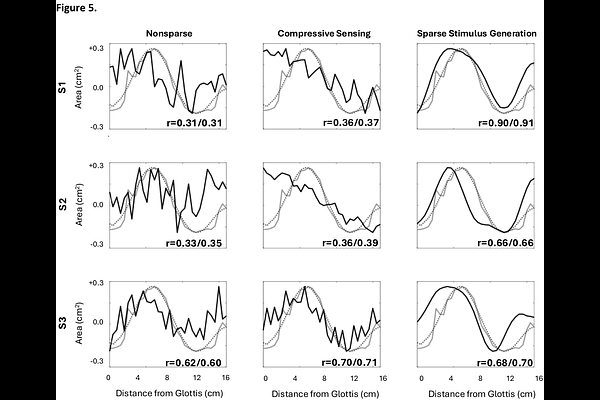

Abstract

Reverse correlation is a widely used and well-established method for probing latent perceptual representations, in which subjects render subjective preference responses to ambiguous stimuli. Stimuli are purposefully designed to have no direct relationship with the target representation (e.g., they are randomly generated), a property that makes each individual stimulus minimally informative toward reconstructing the target and often difficult for subjects to interpret. As a result, a large number of stimulus-response pairs must be gathered from a given subject for reconstructions to be of sufficient quality, which makes the task fatiguing. Recent work has demonstrated that the number of trials needed can be substantially reduced using a compressive sensing framework, which builds into the reconstruction process the assumption that the target representation can be sparsely represented in some basis. Here, we introduce an alternative method that incorporates the sparsity assumption directly into stimulus generation, which holds promise not only for improving efficiency but also for improving the interpretability of stimuli from the subject's perspective. We develop this new method as a mathematical variation of the compressive sensing approach, then conduct one simulation study and two human-subjects experiments to assess its benefits for reconstruction quality, sample size efficiency, and subjective interpretability. Results show that sparse stimulus generation improves all three of these areas relative to conventional reverse correlation approaches, and also relative to compressive sensing in most conditions.